AI SEO for Multi-Location Businesses: The Complete 2026 Growth Playbook

AI SEO for multi-location businesses is the fastest, most scalable path to building, optimizing, and maintaining location pages, citations, and listings across dozens or thousands of storefronts. This guide combines automation strategy, entity data architecture, content frameworks, governance models, and KPI tracking so every branch you operate wins its own local SERPs — without your team drowning in manual work.

Why This Matters Right Now

- Local packs and Google Maps drive the highest-intent, highest-converting traffic for physical and service-area businesses.

- AI collapses months of manual page creation, citation cleanup, and schema tagging into days — at any scale.

- Standardized entity data and structured markup are now prerequisite signals for AI-powered answer engines and assistants.

- Brands that deploy disciplined AI SEO systems today are compounding their local authority while competitors are still updating spreadsheets.

This playbook walks you through the complete strategy: what AI SEO for multi-location businesses actually means, why it outperforms traditional approaches, how to implement it step by step, which data fields and schema types matter most, how to govern AI outputs safely, and which KPIs tell you it’s working.

What Is AI SEO for Multi-Location Businesses?

AI SEO for multi-location businesses is the disciplined use of machine learning, natural language generation, and automation to create, enrich, and maintain location-specific content, structured data, and citation profiles at scale. The goal is to give every branch, franchise, or service-area location its own unique, search-optimized web presence — without requiring a human to hand-craft each page from scratch.

The “AI” component handles repeatable, data-driven tasks: drafting localized page copy from structured fields, tagging schema markup automatically, flagging citation inconsistencies, monitoring Google Business Profile (GBP) performance anomalies, and generating FAQ variations tuned to local intent signals.

The “multi-location” dimension means you’re not just optimizing one page — you’re operating a network of location entities, each of which must rank, convert, and earn trust independently while also reinforcing the parent brand’s authority.

Direct Answer

AI SEO for multi-location businesses is the use of AI-driven automation to build and maintain location pages, Google Business Profiles, citations, and structured data so every branch ranks better, converts more visitors, and compounds local authority over time — without proportionally scaling your team’s manual workload.

Who Needs Multi-Location AI SEO?

This approach is essential for any organization operating or marketing in more than one geographic market, including:

- Franchise networks — 10 to 10,000+ locations sharing a brand but competing in different micro-markets

- Multi-site retailers — brick-and-mortar stores needing individual store pages that rank locally

- Regional service businesses — HVAC, dental, legal, financial, or home services operating across multiple cities or territories

- Healthcare and hospitality groups — clinics, hotels, or restaurants with dozens of properties each requiring unique, compliant content

- Real estate and property management firms — serving distinct neighborhoods and suburbs with varying inventory and pricing

Once you’re managing SEO for more than a handful of locations, manual processes start to become a competitive liability, and the case for automation typically firms up around 10–15 locations. AI changes that equation.

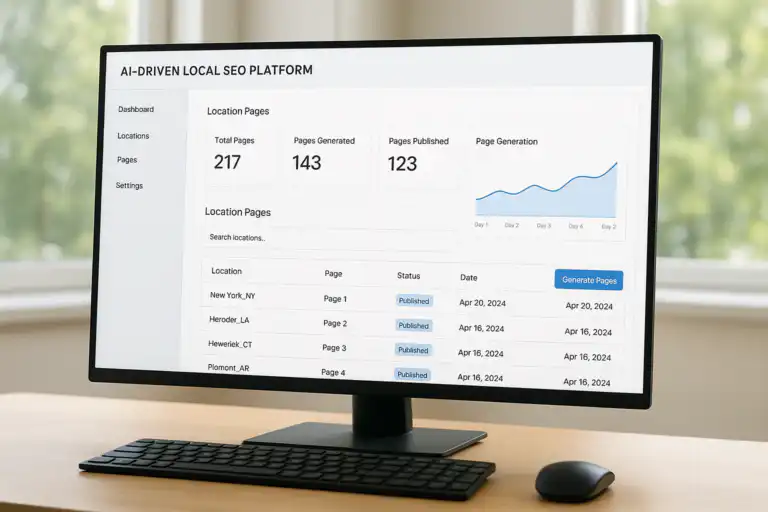

Programmatic page generation via AI SEO at enterprise scale — each location gets unique, structured content from a single governed data source.

Why AI-Driven Local SEO Wins for Distributed Brands

Traditional local SEO for multi-location businesses is a numbers problem. If you have 50 locations and each needs a well-researched, uniquely written page, an optimized GBP, consistent citations across 60+ directories, structured data, and ongoing monitoring, that adds up to thousands of individual tasks recurring every quarter.

AI breaks the linear relationship between locations and labor. Here’s exactly why it wins:

1. Consistent Entity Signals Across the Entire Footprint

Search engines, Google in particular, reason about entities, not just keywords. AI helps you define and broadcast consistent entity relationships: brand → location → service → category → geo. When every page, every GBP, and every citation sends the same entity signals, Google’s confidence in your brand’s local authority compounds.

2. Speed-to-Publish for New Locations

When a new location opens, your SEO foundation should be live on day one — not three months later. AI-powered template systems can generate a fully structured, schema-tagged, GBP-ready location page from a dataset row in minutes. That’s a compounding competitive advantage for fast-growing brands.

3. Scalable Long-Tail Query Coverage

Each location is a doorway to hundreds of long-tail local queries: “[service] near [neighborhood],” “[service] in [city],” “[brand] [zip code].” AI can generate and optimize content for all of those variations across every location — a coverage footprint no human team can replicate manually.

4. Real-Time Anomaly Detection

GBP suspensions, hours mismatches, citation conflicts, and sudden ranking drops happen daily across large footprints. AI monitoring catches these in real time rather than in your monthly audit, and that speed can be the difference between a one-day revenue dip and a three-week outage.

5. Competitive Intelligence at Location Level

AI tools can scan competitor GBPs, review sentiment, category usage, and content gaps at the individual market level. This means your team can make smarter decisions about where to invest SEO resources next — city by city, neighborhood by neighborhood.

“Local intent changes block by block. The winning play is standardized data with locally unique value — repeatable by AI, reviewed by humans, and always grounded in what a real customer in that market actually needs.”

The Core Challenges of Multi-Location SEO — and How AI Solves Each One

Before mapping out a solution, it’s worth being honest about what makes multi-location SEO genuinely hard. These are the real friction points brands face — and the AI-driven answer to each.

| Challenge | Why It’s Hard at Scale | AI SEO Solution |
|---|---|---|
| Duplicate content | Copy-paste location pages get filtered by Google, wasting crawl budget | AI generates unique intros, FAQs, and service descriptions from local data fields |
| NAP inconsistency | Name, address, phone mismatches across hundreds of directories destroy trust | Automated citation audits flag and correct conflicts at network level |
| Thin location pages | Pages with only address and phone number rank poorly and convert worse | Modular AI content adds reviews, FAQs, neighborhoods, landmarks, and services |
| Schema coverage gaps | Manual schema tagging for 100+ pages is error-prone and rarely consistent | Automated schema output directly from the location dataset, zero manual tagging |
| GBP management overhead | Updating photos, posts, hours, and Q&As across hundreds of profiles is unsustainable | Batch update tools and AI-drafted GBP posts scheduled and deployed programmatically |
| Review response delays | Slow responses hurt reputation and ranking signals in competitive local markets | AI drafts personalized responses at volume with human approval before publish |
| Reporting fragmentation | Combining rank, traffic, and GBP data for 50+ locations in one view is painful | Unified dashboards aggregate all location-level KPIs with automated anomaly flagging |

How to Implement AI SEO for Multi-Location Businesses: Step-by-Step

The implementation framework below is sequenced deliberately. Skipping ahead — especially deploying AI before your data is clean — is the single most common cause of scaled SEO mistakes. Follow the order.

Step 1: Centralize Your Location Dataset

Build a single source of truth for every location. This dataset is the engine that powers every downstream AI output. If the data is wrong here, every page, every schema block, and every citation will inherit those errors at scale.

Required fields: business name (exact), address (formatted to USPS standard), city, state, ZIP, phone, hours by day, latitude/longitude, GBP category (primary + secondary), services list, booking URL, UTM parameters, and coverage area polygons or city/zip lists.
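As a rough illustration of what a single-source-of-truth check can look like, the sketch below validates one dataset row before it feeds any template. The field names (`name`, `primary_category`, and so on) and the US-centric ZIP and phone rules are assumptions made for this example, not a prescribed schema.

```python
import re

# Hypothetical column names; adapt them to your own dataset.
REQUIRED_FIELDS = ("name", "address", "city", "state", "zip", "phone",
                   "hours", "lat", "lng", "primary_category")


def validate_location(row: dict) -> list[str]:
    """Return a list of human-readable problems found in one location row."""
    problems = []
    for key in REQUIRED_FIELDS:
        if row.get(key) in (None, "", [], {}):
            problems.append(f"missing {key}")

    zip_code = str(row.get("zip") or "")
    if zip_code and not re.fullmatch(r"\d{5}(-\d{4})?", zip_code):
        problems.append("zip is not a 5-digit or ZIP+4 code")

    digits = re.sub(r"\D", "", str(row.get("phone") or ""))
    if digits and len(digits) not in (10, 11):
        problems.append("phone does not contain 10 or 11 digits")

    lat, lng = row.get("lat"), row.get("lng")
    if isinstance(lat, (int, float)) and not -90 <= lat <= 90:
        problems.append("lat out of range")
    if isinstance(lng, (int, float)) and not -180 <= lng <= 180:
        problems.append("lng out of range")
    return problems
```

Running a check like this on every row before generation keeps a bad address or phone number from being copied into pages, schema, and citations at once.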

Step 2: Map Your Entity Architecture

Define the entity hierarchy before any content is created. Your brand is a top-level entity. Each location is a child entity with its own ID, category, and geo-coordinates. Services are associated entities linking locations to intent signals. This map tells AI models how to write about each location in a way that reinforces the right entity relationships for Google’s Knowledge Graph.

Practical output: a JSON or spreadsheet that assigns a unique entity ID, canonical category, and parent-brand reference to every location.
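One possible shape for that entity map is sketched below. The ID convention (`brand:state:city:store_code`) and the input keys are illustrative assumptions; any stable, unique scheme would serve the same purpose.

```python
import re


def slug(text: str) -> str:
    """Lowercase a label and replace runs of non-alphanumerics with hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_entity_map(brand_id: str, locations: list[dict]) -> list[dict]:
    """Assign each location a stable entity ID and a parent-brand reference."""
    entities = []
    for loc in locations:
        entity_id = f"{brand_id}:{slug(loc['state'])}:{slug(loc['city'])}:{loc['store_code']}"
        entities.append({
            "entity_id": entity_id,          # hypothetical ID convention
            "parent_brand": brand_id,
            "canonical_category": loc["primary_category"],
            "geo": {"lat": loc["lat"], "lng": loc["lng"]},
            "services": sorted(loc.get("services", [])),
        })
    return entities
```

The resulting list can be dumped to JSON or a spreadsheet and referenced by every downstream template and prompt.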

Step 3: Design Modular Page Templates

Great location pages are built from modules, not monolithic copy. Design reusable sections with dynamic placeholders that AI fills from your dataset. Structure each template around the content types that drive conversions and rankings: local intro, services, service area, staff or team proof, reviews, directions, FAQs, and CTAs.

Recommended modules per location page:

- 60–100 word local intro with entity mentions (brand + city + primary service)

- Services grid with benefit-focused bullets unique to that location’s offering

- Service area section naming neighborhoods, zip codes, or surrounding cities

- Directions block with parking, transit, and landmark references

- Review highlights module pulling location-specific ratings

- 3–5 FAQ items targeting hyper-local questions

- Conversion CTA with location-specific phone and booking link
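To make the idea of modules with dynamic placeholders concrete, here is a minimal sketch using Python's standard `string.Template`. The two module templates and the field names are hypothetical; a real system would hold one template per module in version control.

```python
from string import Template

# Hypothetical module templates; placeholders map to dataset column names.
MODULES = {
    "directions": Template(
        "Find us at $address, $city. Parking: $parking. Nearby: $landmarks."
    ),
    "cta": Template("Call $phone or book online at $booking_url."),
}


def render_modules(loc: dict) -> dict[str, str]:
    """Fill every module from one dataset row.

    Template.substitute raises KeyError when a placeholder has no value,
    so an incomplete row fails loudly instead of publishing a blank.
    """
    fields = dict(loc, landmarks=", ".join(loc["landmarks"]))
    return {name: tpl.substitute(fields) for name, tpl in MODULES.items()}
```

Failing on a missing field is a deliberate choice here: it pushes data problems back to the dataset rather than letting them surface on a live page.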

Step 4: Generate Draft Copy with AI from Structured Data

Feed your location dataset fields directly into AI generation prompts. Each prompt should reference the specific city, services, hours, and coverage area for that location — this is what makes the output unique rather than generic. Include brand tone guides, restricted claims, and formatting rules inside every prompt as constraints.

Pro tip: Generate 3–5 variations of the intro and FAQ sections for each location, then have humans select or lightly edit the strongest one. This hybrid approach dramatically outperforms either fully manual or fully automated copy.
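A prompt assembled from structured fields might look something like the sketch below. The wording of the prompt and the keys (`brand`, `hours_summary`, `coverage_area`) are assumptions for illustration; the resulting string would be passed to whichever generation tool you use.

```python
def build_intro_prompt(loc: dict, tone_guide: str, restricted_claims: list[str]) -> str:
    """Assemble one location-specific prompt from dataset fields plus brand constraints."""
    services = ", ".join(loc["services"])
    areas = ", ".join(loc["coverage_area"])
    banned = "; ".join(restricted_claims)
    return (
        f"Write a 60-100 word introduction for the {loc['brand']} location "
        f"in {loc['city']}, {loc['state']}.\n"
        f"Services offered here: {services}.\n"
        f"Hours: {loc['hours_summary']}.\n"
        f"Areas served: {areas}.\n"
        f"Tone guide: {tone_guide}\n"
        f"Never include these claims or terms: {banned}.\n"
        "Use only the facts above; do not invent prices, awards, or statistics."
    )
```

Because every variable part comes from the dataset row, two locations can never receive the same prompt unless their data is identical.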

Step 5: Automate Schema Markup for Every Location

Schema is not optional at scale — it’s the structured language that tells AI-powered search engines and answer systems exactly what each location offers, when it’s open, and where it is. The most critical schema types for multi-location businesses are LocalBusiness (or its subtypes), Service, FAQPage, and BreadcrumbList.

Output schema automatically from your dataset. If the data is correct, the schema will be correct — no manual JSON-LD editing required. For Google’s eligibility requirements and technical specifications, refer to Google Search Central’s structured data documentation. For the broader standard, see the Schema.org reference on Wikipedia.
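As a hedged sketch of schema generated from a dataset row, the function below emits a `LocalBusiness` JSON-LD block using standard Schema.org properties. The input keys (`schema_type`, `url`, and so on) and the `#location` ID suffix are assumptions for this example; check the output against Google's documentation before relying on it.

```python
import json


def local_business_jsonld(loc: dict) -> str:
    """Build a JSON-LD string for one location from its dataset row."""
    data = {
        "@context": "https://schema.org",
        # Use the most specific subtype the dataset provides.
        "@type": loc.get("schema_type", "LocalBusiness"),
        "@id": loc["url"] + "#location",  # hypothetical ID convention
        "name": loc["name"],
        "url": loc["url"],
        "telephone": loc["phone"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": loc["address"],
            "addressLocality": loc["city"],
            "addressRegion": loc["state"],
            "postalCode": loc["zip"],
            "addressCountry": loc.get("country", "US"),
        },
        "geo": {
            "@type": "GeoCoordinates",
            "latitude": loc["lat"],
            "longitude": loc["lng"],
        },
    }
    return json.dumps(data, indent=2)
```

The returned string would be placed inside a `<script type="application/ld+json">` tag in the page template.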

Step 6: Optimize and Synchronize Google Business Profiles

Your website location pages and your GBP profiles must tell the same story. Every field — name, address, phone, hours, categories, services, photos, and description — must match your canonical dataset exactly. Discrepancies between GBP and your website are among the most common and most damaging issues in multi-location SEO.

AI-assisted GBP tasks: batch category audits, automated Google Posts scheduling, AI-drafted Q&A responses, photo tagging and upload at scale, and review response drafting with brand voice controls.

Step 7: Build and Clean Your Citation Network

Citations — mentions of your business NAP on third-party directories — remain a meaningful local ranking factor. For multi-location businesses, the challenge isn’t building new citations but ensuring consistency at scale. AI can crawl your existing citation profiles, identify NAP conflicts, flag outdated information, and prioritize cleanup by citation authority and traffic potential.

Priority citation sources: Google Business Profile, Apple Maps, Bing Places, Yelp, Facebook, data aggregators (Data Axle, Neustar, Foursquare), and industry-specific directories relevant to your category.
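A simple version of the NAP conflict check could be sketched as follows. It assumes you have already collected listings as dictionaries with `source`, `name`, `address`, and `phone` keys; how you collect them (exports, vendor tools) is outside this example. The normalization is deliberately naive and does not reconcile abbreviations such as "St" versus "Street".

```python
import re


def _clean(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    text = re.sub(r"[^a-z0-9 ]", "", text.lower())
    return re.sub(r"\s+", " ", text).strip()


def normalize_nap(name: str, address: str, phone: str) -> tuple[str, str, str]:
    """Reduce a NAP triple to a comparable form (phone keeps its last 10 digits)."""
    return _clean(name), _clean(address), re.sub(r"\D", "", phone)[-10:]


def find_nap_conflicts(canonical: dict, listings: list[dict]) -> list[dict]:
    """Return each listing that disagrees with the canonical record, with the differing fields."""
    want = normalize_nap(canonical["name"], canonical["address"], canonical["phone"])
    conflicts = []
    for listing in listings:
        got = normalize_nap(listing["name"], listing["address"], listing["phone"])
        fields = [f for f, a, b in zip(("name", "address", "phone"), want, got) if a != b]
        if fields:
            conflicts.append({"source": listing["source"], "fields": fields})
    return conflicts
```

Sorting the resulting conflicts by directory authority gives you the cleanup priority list described above.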

Step 8: Publish, Interlink, and Architect Your Location Hub

Internal link architecture determines how PageRank flows through your location footprint. Build a hub-and-spoke model: a parent “Locations” or “Find a Store” page links to state or regional hubs, which link to individual city pages, which link to specific location pages. This hierarchy signals geographic scope, passes authority downward, and makes your footprint crawlable at depth.

Deploy in batches: roll out 20–30 pages at a time, monitor indexing performance, and iterate on templates before scaling to the full footprint.
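The hub-and-spoke hierarchy and the batched rollout can both be expressed in a few lines. The `/locations/{state}/{city}/{store}/` URL pattern and the default batch size of 25 are assumptions chosen to match the ranges mentioned above, not requirements.

```python
import re


def slug(text: str) -> str:
    """Lowercase a label and replace runs of non-alphanumerics with hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def location_path(loc: dict) -> list[str]:
    """URLs for each level of the hierarchy, from the locations hub down to the store page."""
    state, city, store = slug(loc["state"]), slug(loc["city"]), slug(loc["store_name"])
    return [
        "/locations/",
        f"/locations/{state}/",
        f"/locations/{state}/{city}/",
        f"/locations/{state}/{city}/{store}/",
    ]


def batches(items: list, size: int = 25):
    """Yield consecutive rollout batches of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]
```

The same list of URLs can drive breadcrumb links on the page, so the visible navigation and the crawl path stay in agreement.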

Step 9: Monitor, Alert, and Iterate in Real Time

Scaled systems create scaled vulnerabilities. A single broken redirect template, a GBP category change, or an hours update that didn’t propagate correctly can silently hurt dozens of locations before anyone notices. Set up real-time SEO issue alerts that flag crawl errors, ranking drops, GBP suspensions, UTM mismatches, and citation conflicts as they occur — not at your next scheduled audit.
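One very simple form of alerting is a threshold check against a trailing average, sketched below. It assumes you already export a daily metric per location (for example GBP calls or organic sessions); the 7-day window and 50% threshold are placeholder values to tune, and a scheduled check like this is a stand-in for whatever real-time tooling you adopt.

```python
from statistics import mean


def detect_drops(daily_counts: dict[str, list[int]],
                 window: int = 7,
                 threshold: float = 0.5) -> list[str]:
    """Flag locations whose latest value fell below threshold x the trailing average.

    `daily_counts` maps a location ID to its daily values, oldest first.
    """
    alerts = []
    for location_id, series in daily_counts.items():
        if len(series) <= window:
            continue  # not enough history to form a baseline
        baseline = mean(series[-window - 1:-1])  # the `window` days before the latest
        if baseline > 0 and series[-1] < threshold * baseline:
            alerts.append(location_id)
    return alerts
```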

Data first → Templates second → AI third → QA always → Monitor continuously. Reversing this order is how brands end up with 500 pages of scaled thin content that tanks their entire domain.

Entity relationship graph showing how parent brand, location entities, services, and citations interconnect to signal local relevance to search engines.

Data Architecture: The Foundation Every Multi-Location AI SEO System Requires

The quality ceiling of your AI SEO system is set entirely by the quality of your location dataset. Garbage in, garbage out — but at scale, that becomes thousands of garbage pages indexed across your domain. This section defines exactly what your data model needs to contain.

Core Location Data Fields

- Legal business name — exact match to GBP, no keyword stuffing

- Street address — USPS-standardized, suite/unit noted separately

- City, state, ZIP — and county where relevant for service-area businesses

- Primary phone — location-specific (not a national 1-800 number)

- Hours of operation — by day, including holiday override logic

- Latitude / longitude — verified coordinates, not geocoded estimates

- GBP primary category — matched to Google’s official category list

- GBP secondary categories — up to 9 additional relevant categories

- Services offered — with short descriptions and pricing tiers where applicable

- Coverage area — named neighborhoods, zip codes, or city list for SABs

- Parking and accessibility notes — lot, street, ADA access

- Nearby landmarks — at least 2–3 recognizable references per location

- Staff or manager name — for E-E-A-T signals and personalization

- Review summary — average rating and count per platform

- Booking / contact URL — location-specific UTM-tagged link

- Photos inventory — interior, exterior, staff, products by location ID
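For the location-specific UTM-tagged link, a small helper along these lines keeps tagging consistent. The parameter values (`google`, `organic`, `gbp`) and the use of `utm_content` to carry the location ID are assumptions; follow whatever convention your analytics setup already uses.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def utm_url(base_url: str, location_id: str, source: str = "google",
            medium: str = "organic", campaign: str = "gbp") -> str:
    """Append UTM parameters to a URL, preserving any existing query string."""
    parts = urlsplit(base_url)
    query = dict(parse_qsl(parts.query))
    query.update({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
        "utm_content": location_id,  # hypothetical: location ID carried in utm_content
    })
    return urlunsplit(parts._replace(query=urlencode(query)))
```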

Schema Types That Matter Most for Multi-Location AI SEO

Structured data is how you translate your location dataset into machine-readable signals that improve eligibility for rich results and AI answer extraction. For multi-location businesses, prioritize these types:

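- LocalBusiness (or subtype): the primary schema for each location page; use the most specific subtype available (e.g., Dentist, AutoRepair, LegalService)

- Service: associate services with the specific location offering them, not just the brand

- FAQPage: gives AI answer engines clean question-and-answer pairs to parse. Since August 2023 Google shows FAQ rich results only for well-known, authoritative government and health sites, so most brands should not expect a SERP enhancement from this markup alone

- BreadcrumbList: reinforces your hub-and-spoke architecture for crawlers

- Review / AggregateRating: describes location-specific review data in machine-readable form. Google treats review markup that a LocalBusiness or Organization publishes about itself on its own site as self-serving and does not show star snippets for it, so check the review snippet guidelines before counting on rich results

- OpeningHoursSpecification: describes opening hours in structured form so search engines and assistants can read them reliably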

Generating these programmatically from your dataset gives you consistent markup on every location page without manual editing, provided the underlying data is correct. Validate a sample of pages after every template change.
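As one example of dataset-driven markup, the sketch below turns per-day hours into `OpeningHoursSpecification` objects, grouping days that share the same times. The two-letter day keys and the choice to omit closed days are assumptions for this example; holiday overrides would need additional handling.

```python
from typing import Optional

DAY_NAMES = {"Mo": "Monday", "Tu": "Tuesday", "We": "Wednesday", "Th": "Thursday",
             "Fr": "Friday", "Sa": "Saturday", "Su": "Sunday"}


def opening_hours_spec(hours: dict[str, Optional[tuple[str, str]]]) -> list[dict]:
    """Group days with identical open/close times into OpeningHoursSpecification objects.

    `hours` maps a day key to ("HH:MM", "HH:MM"), or None when closed.
    """
    grouped: dict[tuple[str, str], list[str]] = {}
    for day, times in hours.items():
        if times is None:
            continue  # closed days are simply omitted in this sketch
        grouped.setdefault(times, []).append(DAY_NAMES[day])
    return [
        {"@type": "OpeningHoursSpecification", "dayOfWeek": days,
         "opens": opens, "closes": closes}
        for (opens, closes), days in grouped.items()
    ]
```

The returned list can be attached to the location's JSON-LD under the `openingHoursSpecification` property.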

Content Frameworks: How to Keep Location Pages Unique at Scale

The biggest failure mode in multi-location content is what Google calls “doorway pages” — pages that exist only to capture a geographic keyword without providing genuine local value. AI makes it temptingly easy to produce thousands of these. The solution is a structured content framework that mandates locally specific proof at every page.

The Five Layers of Locally Unique Content

- Geographic specificity: Mention the specific city, neighborhood, district, or zone the location serves. Reference local landmarks, transit lines, or well-known nearby businesses to anchor the content geographically.

- Service differentiation: If different locations offer different services, hours, or pricing, those differences must be reflected. AI can surface these distinctions from your dataset automatically.

- Social proof: Pull location-specific review highlights, star ratings, and review counts. A page showing “4.8 stars from 312 reviews in Austin” is far more credible — and more rank-worthy — than a page with no review data.

- People and team: Where compliant with brand policy, reference the local manager or team. Named individuals on location pages are a strong E-E-A-T signal, especially in YMYL categories like health, legal, or finance.

- Practical utility: Directions, parking, transit stops, wheelchair access, and “what to expect on your first visit” content serve real user needs — and pages that serve real needs rank and convert better than those that don’t.

AI Prompt Engineering for Locally Unique Copy

The difference between generic AI copy and genuinely useful local content comes down to what you put into the prompt. Effective multi-location AI prompts include:

- The location’s full address and the 3 nearest major landmarks

- The primary and secondary GBP categories for that location

- The 3–5 services most commonly requested at that specific branch

- Current review volume and average rating

- Any seasonal demand patterns relevant to that climate or region

- Brand tone guide and a list of restricted claims or terms

A well-structured location page should have no more than 30–40% structural overlap with sibling location pages (shared headings, CTAs, and navigation). The remaining 60–70% should be derived from location-specific data fields. AI makes this achievable; templates without local data fields make it impossible.
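One way to put a number on overlap between sibling pages is Jaccard similarity over word shingles, sketched below. This is a proxy chosen for illustration, not the measure the 30–40% guideline was defined with, so treat the threshold you apply to it as something to calibrate on your own pages.

```python
def shingles(text: str, size: int = 5) -> set[tuple[str, ...]]:
    """Return the set of overlapping word sequences of length `size`."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def overlap_ratio(page_a: str, page_b: str, size: int = 5) -> float:
    """Jaccard similarity of word shingles: 0.0 is no shared phrasing, 1.0 is identical."""
    a, b = shingles(page_a, size), shingles(page_b, size)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)
```

Comparing each new page against a handful of its siblings before publishing is usually enough to catch a template that is leaning too heavily on shared copy.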

Each storefront competes independently in its local market — AI SEO ensures every location has the optimized digital presence to win hyperlocal searches and drive in-store or service conversions.

Google Business Profile Optimization at Scale

Google Business Profile is not a secondary concern — for most multi-location businesses, it generates more qualified contacts than the website itself. In competitive local markets, the difference between rank 1 and rank 4 in the local pack is often a function of GBP completeness and activity, not just website authority.

AI-Assisted GBP Optimization Checklist

- Category audit: AI can cross-reference every location’s GBP categories against top-ranking competitors in that market and flag gaps or upgrade opportunities

- Description generation: AI drafts keyword-rich, brand-aligned GBP descriptions for every location using standardized prompts and local data fields

- Google Posts scheduling: AI generates weekly post content (offers, events, updates) tailored to each location’s services and local calendar

- Q&A population: AI seeds the GBP Q&A section with the most commonly asked questions and answers per location type — improving trust and preventing inaccurate public answers

- Review response: AI drafts personalized, on-brand responses to reviews — positive and negative — for human review and approval before posting

- Photo management: AI tags and schedules photo uploads by category (exterior, interior, team, product, service) ensuring each profile meets Google’s completeness benchmarks

GBP Suspension Prevention

Suspensions are a network-level risk. If your GBP management processes are not monitored and if guideline-violating edits go unchecked, a suspension pattern across multiple locations can trigger algorithmic scrutiny of your entire brand’s profiles. AI monitoring tools that flag unusual edits, keyword-stuffed name changes, or address modifications in real time are essential for large footprints.

Governance, QA, and Human Oversight: Non-Negotiables at Scale

AI amplifies whatever you give it. A governance failure at the template level or prompt level doesn’t produce one bad page — it produces hundreds or thousands of bad pages simultaneously. The brands that succeed with AI SEO for multi-location businesses are the ones that treat governance as a strategic function, not a bottleneck.

Four Pillars of AI Content Governance

- Template governance: All page templates must be version-controlled with documented change logs. Any template change is a network-level change. Require sign-off from SEO, brand, legal, and compliance before any template is updated and republished at scale.

- Prompt governance: Treat AI prompts like code. Store them in a central repository, version them, and test changes against a sample of 10–20 locations before batch processing. Include explicit prohibited-content guardrails (false claims, pricing guarantees, competitor mentions) in every prompt.

- Publishing governance: Establish tiered publishing rights. AI-generated drafts go to a review queue. Location managers review local accuracy. Brand team reviews tone and claims. Legal reviews any regulated-industry content. Only then does content publish.

- Monitoring governance: Assign named owners for monitoring dashboards at both the network level (brand team) and location level (regional managers). Define escalation paths and SLA timeframes for issue resolution.

Quality Assurance Checklist Before Publishing

- ✓ NAP on page matches GBP, schema, and citation network exactly

- ✓ Schema validates with no errors in Google’s Rich Results Test

- ✓ No duplicate paragraphs shared verbatim across sibling location pages

- ✓ All internal links resolve correctly (hub pages, breadcrumbs)

- ✓ CTAs link to location-specific URLs with correct UTM parameters

- ✓ Page passes Core Web Vitals on mobile (LCP within 2.5 seconds, INP within 200 ms, CLS at or below 0.1)

- ✓ Local proof elements present (reviews, photos, neighborhood references)

- ✓ No restricted claims or unverified statistics in AI-generated copy
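Some of these checks are easy to automate. As a minimal sketch, the function below finds paragraphs that appear verbatim on more than one location page. It assumes page bodies are plain text with blank lines between paragraphs, and the 8-word minimum is an arbitrary cutoff to ignore short shared elements such as CTAs.

```python
from collections import defaultdict


def shared_paragraphs(pages: dict[str, str], min_words: int = 8) -> dict[str, list[str]]:
    """Map each paragraph found verbatim on 2+ pages to the page IDs containing it."""
    seen: dict[str, set[str]] = defaultdict(set)
    for page_id, body in pages.items():
        for para in body.split("\n\n"):
            # Normalize case and whitespace so trivial differences do not hide a duplicate.
            normalized = " ".join(para.split()).lower()
            if len(normalized.split()) >= min_words:
                seen[normalized].add(page_id)
    return {para: sorted(ids) for para, ids in seen.items() if len(ids) > 1}
```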

A structured QA checklist is the guardrail that makes AI-driven location page automation safe, brand-compliant, and scalable without sacrificing accuracy.

AI SEO for Multi-Location Businesses: Pros, Cons, and Risk Management

Every team evaluating AI-driven local SEO should weigh the genuine advantages against the real risks — and understand the specific conditions under which each risk materializes.

| Advantages | Risks and Mitigations |
|---|---|
| Speed: New location pages live in hours, not months | Risk: Speed without QA propagates errors. Mitigation: Mandatory review queue, never skip for launch deadlines |
| Consistency: Schema, NAP, and brand voice standardized at scale | Risk: Template errors replicate everywhere. Mitigation: Version control, staged rollouts, rollback plan |
| Coverage: Long-tail query coverage impossible with manual teams | Risk: Thin content penalties if pages lack local depth. Mitigation: Data-rich templates with mandatory proof elements |
| Cost efficiency: 80–90% reduction in per-page production cost | Risk: Upfront data work and tooling investment is significant. Mitigation: Calculate break-even vs. manual team costs by location count |
| Real-time monitoring: Issues flagged as they occur, not monthly | Risk: Alert fatigue if thresholds are misconfigured. Mitigation: Tier alerts by severity, assign clear ownership |
| Competitive intelligence: Market-by-market gap analysis at scale | Risk: Acting on competitor data without strategic validation. Mitigation: Human review of AI-generated competitive insights before action |
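The break-even calculation mentioned in the cost-efficiency row can be sketched in a few lines. The model is a deliberate simplification (one fixed upfront cost plus a flat per-page cost on each side), and every figure you feed it is your own estimate.

```python
import math
from typing import Optional


def break_even_locations(fixed_cost: float,
                         manual_cost_per_page: float,
                         ai_cost_per_page: float) -> Optional[int]:
    """Smallest location count at which the AI workflow costs no more than manual work.

    Solves fixed_cost + n * ai_cost_per_page <= n * manual_cost_per_page for n.
    Returns None when the AI workflow is not cheaper per page.
    """
    saving_per_page = manual_cost_per_page - ai_cost_per_page
    if saving_per_page <= 0:
        return None
    return math.ceil(fixed_cost / saving_per_page)
```

For example, with a hypothetical 20,000 in upfront data and tooling work, 500 per manually produced page, and 75 per AI-assisted page, the function returns 48 locations.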

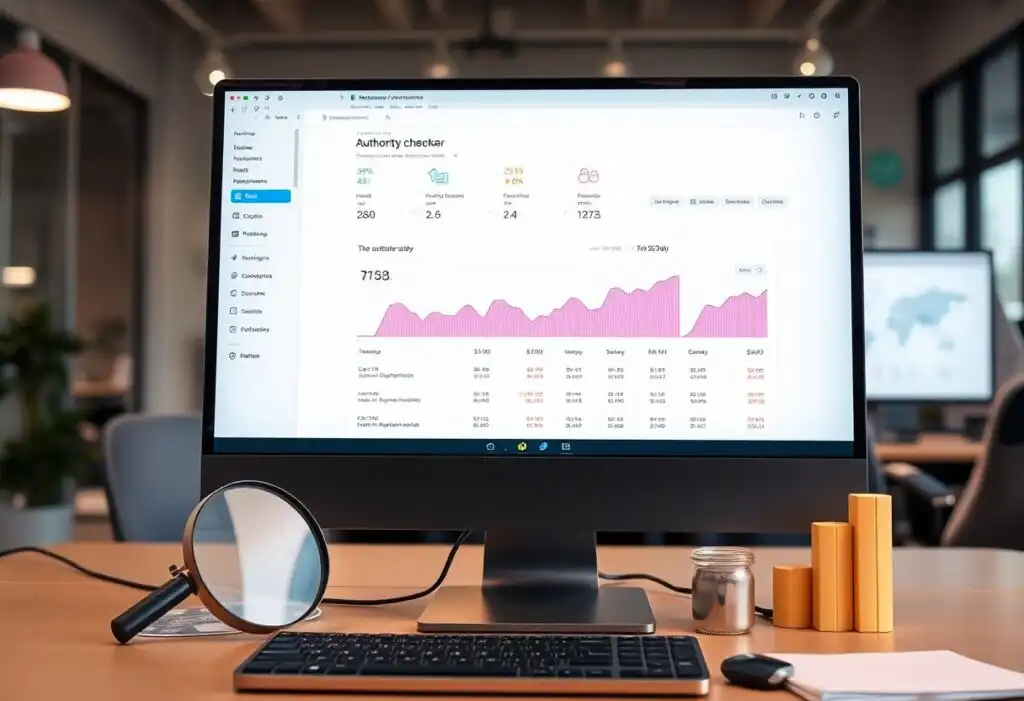

KPIs and Dashboards Every Multi-Location Brand Should Track

Measurement at the location level is what separates strategic AI SEO from a one-time campaign. The goal is a dashboard that shows you not just aggregate performance but which specific markets are winning, which are stagnating, and why — so you can direct resources intelligently.

Local Visibility KPIs

- Local pack rank — tracked by primary and secondary category per location, segmented by zip or neighborhood

- GBP impressions — views in search vs. views on maps, trending over 30/90/365 days

- GBP actions — calls, direction requests, website clicks, and booking clicks per profile

- Organic sessions to location pages — segmented by location, not just aggregate site traffic

- Organic CTR — from Google Search Console, by location page URL

Conversion and Revenue KPIs

- Conversion rate by location page — form fills, calls, bookings per 100 sessions

- Revenue per location attributed to organic — requires UTM architecture and CRM integration

- Cost per lead by channel and location — organic SEO vs. paid, by market

- Review velocity — new reviews per month per location, average rating trend
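As a small worked example of the first KPI in this list, the sketch below computes conversions per 100 sessions for each location page. The input keys (`location_id`, `sessions`, `form_fills`, `calls`, `bookings`) are assumed names for data you would export from your analytics and call-tracking tools.

```python
def conversion_rates(rows: list[dict]) -> dict[str, float]:
    """Conversions per 100 sessions for each location page.

    Pages with zero sessions are skipped rather than reported as 0.
    """
    rates = {}
    for row in rows:
        if row["sessions"] > 0:
            conversions = row["form_fills"] + row["calls"] + row["bookings"]
            rates[row["location_id"]] = round(100 * conversions / row["sessions"], 2)
    return rates
```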

Technical Health KPIs

- Index coverage — percentage of location pages indexed vs. total published

- Core Web Vitals pass rate — especially LCP and CLS on mobile for location pages

- Citation accuracy score — NAP consistency across the citation network

- Schema validation errors — count of structured data issues per location from Search Console

- Crawl anomaly rate — 4xx errors, redirect chains, and broken internal links per location

Build three dashboard views: (1) Executive — revenue and pack rank by region; (2) Operations — GBP actions, review velocity, and citation health; (3) Technical — crawl health, index coverage, and schema errors. Each view should have automated anomaly alerts so stakeholders are notified without checking the dashboard daily.

Common Pitfalls and How to Avoid Them

These are the mistakes most teams make when deploying AI SEO for multi-location businesses for the first time — and the specific actions that prevent each one.

Pitfall 1: Scaling Before Validating the Template

Launching 500 pages before testing 20 is the most common cause of large-scale thin content issues. Always run a pilot: publish 10–20 pages, monitor indexing rates, check for duplicate content issues, and review actual user behavior before expanding. A failed pilot costs you nothing. A failed 500-page launch can cost you six months of recovery.

Pitfall 2: Using a 1-800 Number Across All Location Pages

A national phone number on all location pages destroys NAP uniqueness and prevents accurate attribution by location. Every location page needs its own direct phone number. Use call tracking numbers by location if you need analytics — but ensure the tracking number maps correctly to the canonical local number in your GBP and citation network.

Pitfall 3: Neglecting Internal Link Architecture

Isolated location pages — not connected to hub pages, not included in XML sitemaps, not linked from contextually relevant blog content — get crawled infrequently and rank poorly regardless of content quality. Your hub-and-spoke internal linking model is as important as the page content itself.

Pitfall 4: Treating GBP Optimization as a One-Time Task

GBP is a live, dynamic channel. Profiles with no recent posts, no Q&A activity, no new photos, and stagnant review velocity lose ranking ground to more active competitors. AI makes it feasible to maintain active GBP management across 100+ locations simultaneously — but only if it’s built into your ongoing workflow, not treated as a setup task.

Pitfall 5: Skipping the Review Strategy

Review volume, recency, and response rate are local ranking signals and conversion signals simultaneously. AI can assist with review request workflows, response drafting, and sentiment analysis — but the strategic decision of how many reviews you need per location to compete, and how to close that gap, requires human judgment and a clear acquisition playbook.

For additional strategic frameworks, review SEO secrets for business owners to complement your multi-location rollout with broader organic growth tactics.

AI SEO for Multi-Location Businesses: Frequently Asked Questions

How is AI SEO for multi-location businesses different from regular local SEO?

Regular local SEO can be done manually page by page. AI SEO for multi-location businesses introduces automation, entity data architecture, programmatic content generation, and real-time monitoring to make the same quality achievable across hundreds or thousands of locations simultaneously. The strategy is the same; the execution is systematized and scalable.

How many locations do you need before AI SEO becomes worth the investment?

The ROI inflection point is typically around 10–15 locations. Below that, a skilled human team can manage manually with reasonable effort. Above 15 locations, manual processes start creating gaps, inconsistencies, and missed opportunities that AI systems solve cost-effectively. For brands with 50+ locations, AI SEO transitions from an efficiency play to an absolute competitive necessity.

Will AI-generated location pages be penalized by Google?

AI-generated content is not inherently penalized by Google. Google’s guidance is clear: content is evaluated on helpfulness and quality, not the method of production. AI-generated location pages that are thin, duplicative, or lack genuine local value will underperform — but the same is true of manually written pages with those qualities. The key is using AI to produce content that genuinely serves users in each specific location, not to mass-produce keyword-stuffed doorway pages.

What’s the most important first step when implementing AI SEO for a multi-location brand?

Centralize and clean your location dataset before writing a single prompt or building a single template. Every downstream output — pages, schema, GBP content, citations — is derived from this data. If your dataset has inconsistencies, outdated hours, incorrect addresses, or missing service fields, those errors will be amplified across your entire location footprint at scale.

How does AI help with Google Business Profile management at scale?

AI assists with GBP at scale by automating category audits, generating and scheduling Google Posts, drafting Q&A content, creating review response templates tailored to each location’s voice and context, monitoring for unauthorized edits or suspension triggers, and batch-updating hours and service information across all profiles simultaneously.

What schema types are most important for multi-location businesses?

The highest-priority schema types for multi-location AI SEO are: LocalBusiness (or its category-specific subtype), Service, FAQPage, OpeningHoursSpecification, AggregateRating, and BreadcrumbList. These types help search engines and AI-powered answer systems interpret each location, and several of them support rich result eligibility where Google’s current policies allow it. All should be generated programmatically from your location dataset to ensure accuracy and completeness at scale.

Quick-Start: 30-Day Launch Plan for AI SEO at Scale

If you’re starting from scratch or rebuilding an underperforming location footprint, this 30-day sequence gives you a structured path from data to live, monitored location pages.

Week 1: Data and Architecture

- Audit and consolidate location dataset

- Define entity hierarchy and IDs

- Audit existing GBP profiles

- Identify citation network gaps

Week 2: Templates and Prompts

- Design modular page template

- Build AI prompts with data fields

- Configure automated schema output

- Set up governance and review workflows

Week 3: Pilot and QA

- Generate and review 10–20 pilot pages

- Validate schema and internal links

- GBP sync and content updates

- Publish pilot set and monitor indexing

Week 4: Scale and Monitor

- Roll out remaining locations in batches

- Configure KPI dashboards and alerts

- Launch citation cleanup campaign

- Review pilot KPIs and iterate template

Answer-Ready Summaries for AI Search and Assistants

The following summary blocks are formatted for extraction by AI answer engines and voice assistants. Each answers a discrete, high-intent question about AI SEO for multi-location businesses.

AI SEO for multi-location businesses is the use of machine learning, automation, and structured data to create, optimize, and maintain location pages, Google Business Profiles, citations, and schema at scale — so every location in a brand’s network ranks competitively in its own local market.

- A clean, complete, and current location dataset as the single source of truth

- Governed AI templates and prompts that produce locally unique, brand-safe content

- Real-time monitoring with human oversight at every publishing and quality gate

Data first → Templates second → AI generation third → Human QA always → Real-time monitoring continuously. Every step out of this order increases the probability of scaled errors that are expensive to recover from.

Conclusion: Build a Location SEO Operating System That Compounds

AI SEO for multi-location businesses is not a campaign — it’s an operating system. The brands that win in local search over the next three to five years will be those that treat their location data as a strategic asset, their content templates as governed infrastructure, and their monitoring as a real-time competitive intelligence function.

The playbook is clear: centralize your data, map your entity architecture, build governed modular templates, deploy AI for draft generation and schema automation, synchronize your GBP network, clean your citations, and monitor everything in real time. Each location you optimize adds a compounding node to your brand’s local authority network.

The cost of waiting is not zero — it’s the gap between your rankings and those of a competitor who started six months earlier. Every day without a functioning AI SEO system for your location footprint is a day your competitor is adding reviews, earning citations, and accumulating ranking signals you don’t have.

Start with your data audit. Build your pilot set. Measure. Then scale. You can explore advanced tactics, case studies, and the full framework at rankauthority.com — and for additional SEO strategies that complement this playbook, see SEO secrets for business owners.

Ready to Scale Your Location SEO?

The next market leader will be the brand that treats local SEO as an AI-powered, data-driven operating system — not a manual checklist.

Start with your location data audit today. Every location you optimize is a compounding asset.